Study maps developer backlash against AI slop

Reddit and Hacker News posts describe review overload and open-source trust decay as cheap code shifts costs to maintainers

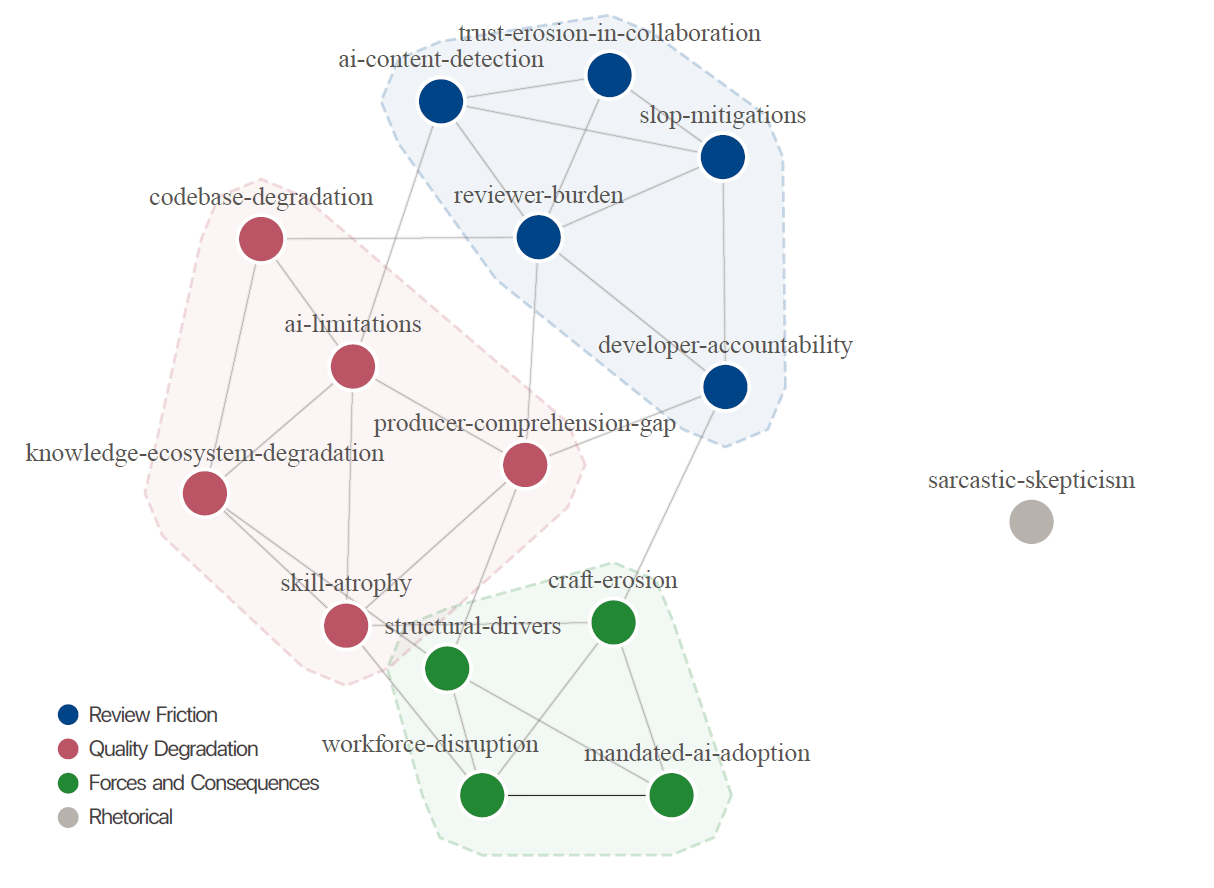

The study's 15 codes and their relationships, grouped into three thematic clusters: Review Friction (blue), Quality Degradation (pink), and Forces and Consequences (green). The isolated gray node "sarcastic-skepticism" runs as a rhetorical pattern across all topics. | Image: Baltes, Cheong, Treude (2026)

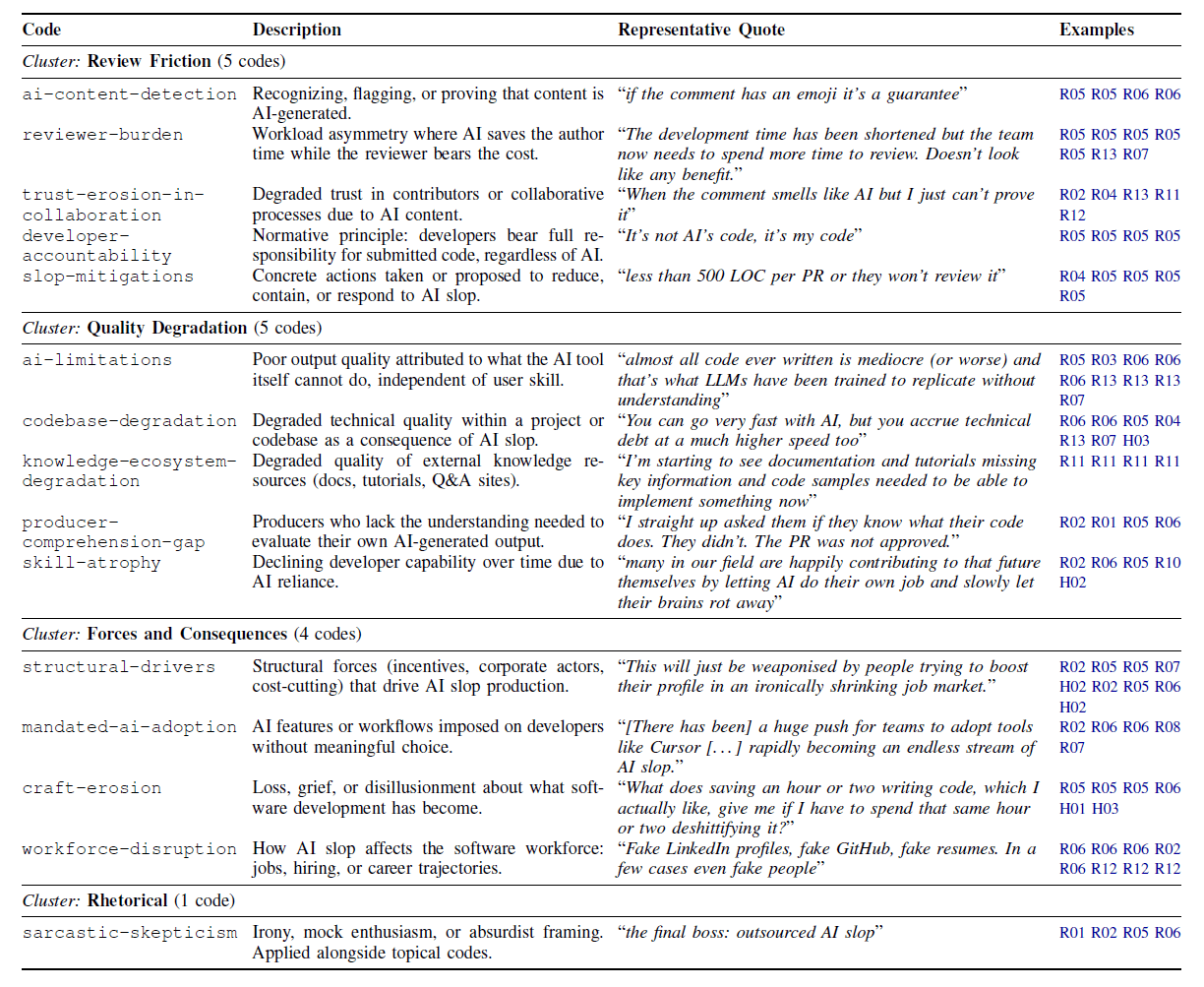

The full codebook with 15 categories, their descriptions, and representative quotes from the analyzed developer discussions. | Image: Baltes, Cheong, Treude (2026)

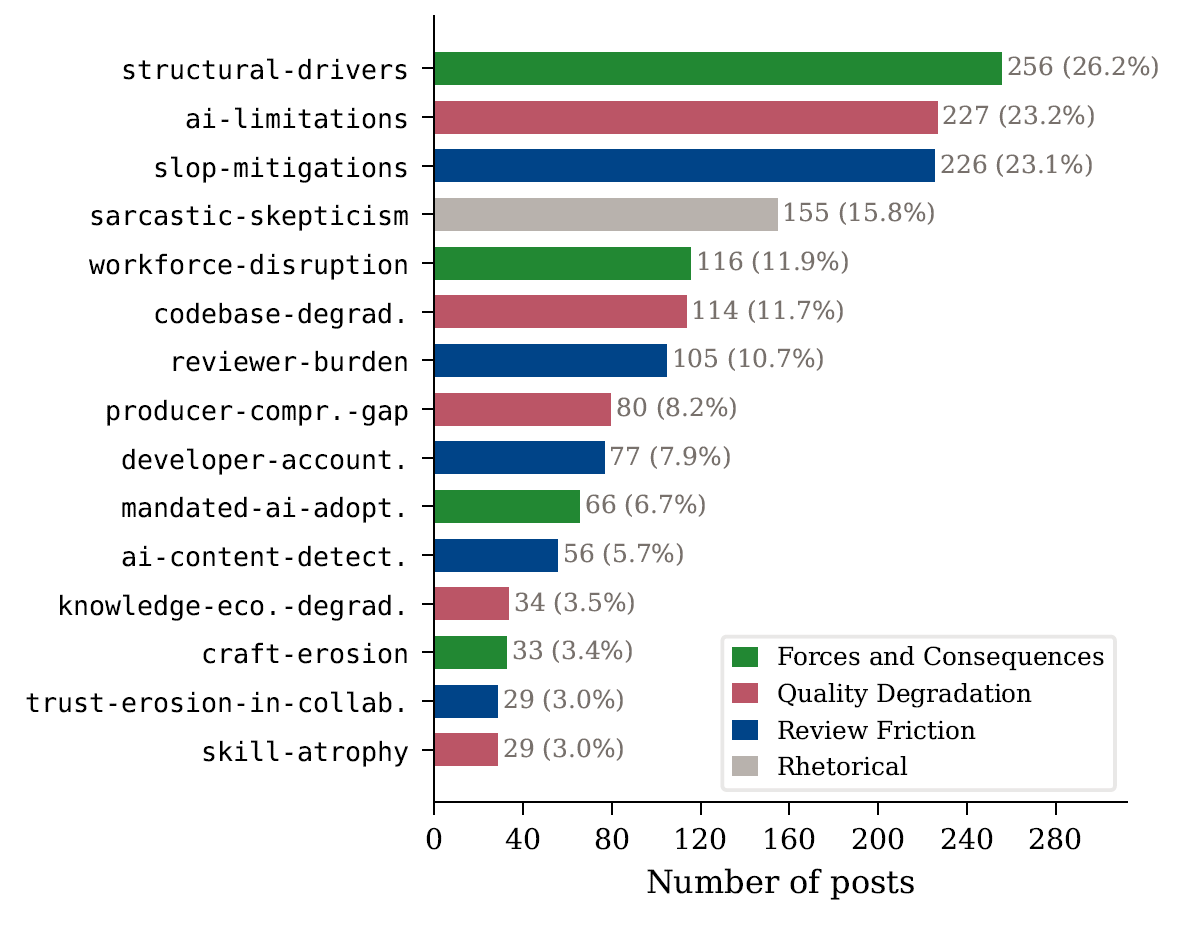

Structural drivers, AI limitations, and countermeasures dominate the discussion. Sarcastic skepticism is the fourth most common code at 15.8 percent. | Image: Baltes, Cheong, Treude (2026)

A new qualitative study mapping developer complaints about “AI slop” argues that low-quality AI-generated code is shifting costs onto reviewers and open-source maintainers, according to The Decoder. Researchers from Heidelberg University, the University of Melbourne and Singapore Management University analysed 1,154 posts across 15 threads on Reddit and Hacker News where participants explicitly discussed “AI slop,” then organised recurring arguments into a codebook spanning review friction, quality degradation, and downstream consequences.

The study is careful about what it is and what it is not. Because the dataset was built by searching for threads containing the term “AI slop,” it largely captures a self-selecting group of critics rather than a representative sample of developers. That bias matters: the paper is best read as a structured inventory of complaints that are already circulating, not as a measurement of how common those complaints are.

Still, the pattern it describes matches what many maintainers say privately: the person who pastes AI output gets the time savings, while the person who has to merge, maintain and debug inherits the risk. The Decoder highlights a central grievance that AI assistance can shorten initial development but lengthen review, turning reviewers into de facto “prompt engineers” who must infer intent from generic code. In open-source projects, where review labour is often unpaid, that imbalance is more than an annoyance—it is a throughput constraint.

The paper links the complaints to concrete examples already visible in the ecosystem. The Decoder notes that the curl project shut down its bug bounty program after being flooded with AI-generated vulnerability reports that consumed maintainer time without yielding valid findings. Similar issues have been reported by Apache Log4j 2 and the Godot game engine, where maintainers and security teams face a rising volume of low-signal submissions. The result is a predictable shift in behaviour: tighter contribution rules, higher friction for newcomers, and more aggressive filtering—measures that protect maintainer time but can also reduce the openness that made these projects valuable.

The study also describes workplace spillovers. Developers reported AI workflows being mandated by management, including executives copying AI output into responses to technical problems. That dynamic does not require malice; it only requires a budget holder who can claim productivity gains while pushing the verification burden onto engineers who cannot afford to be wrong.

The immediate novelty here is not that AI-generated code can be messy, but that the mess scales. As tools make output cheaper, the scarce resource becomes review attention—and the projects that cannot price that attention will be the first to ration it.