Zhipu AI ships GLM-5V-Turbo design-to-code model

Multimodal system turns mockups into runnable front-end projects, moving the bottleneck from UI implementation to verification and deployment

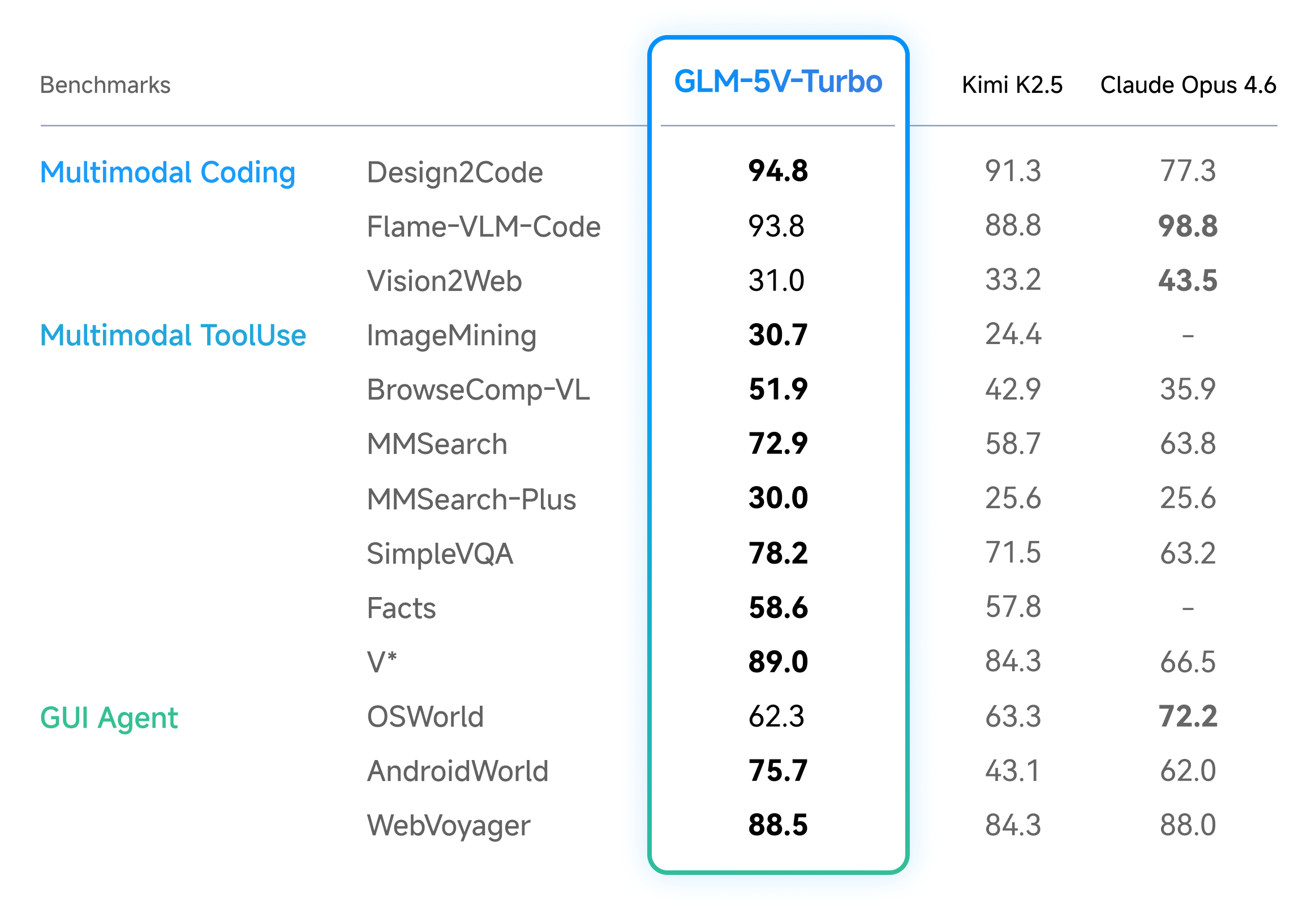

Z.AI says GLM-5V-Turbo leads in most multimodal coding and tool-use categories, while Claude Opus 4.6 pulls ahead on a few benchmarks such as Flame-VLM-Code and OSWorld. | Image: Z.AI

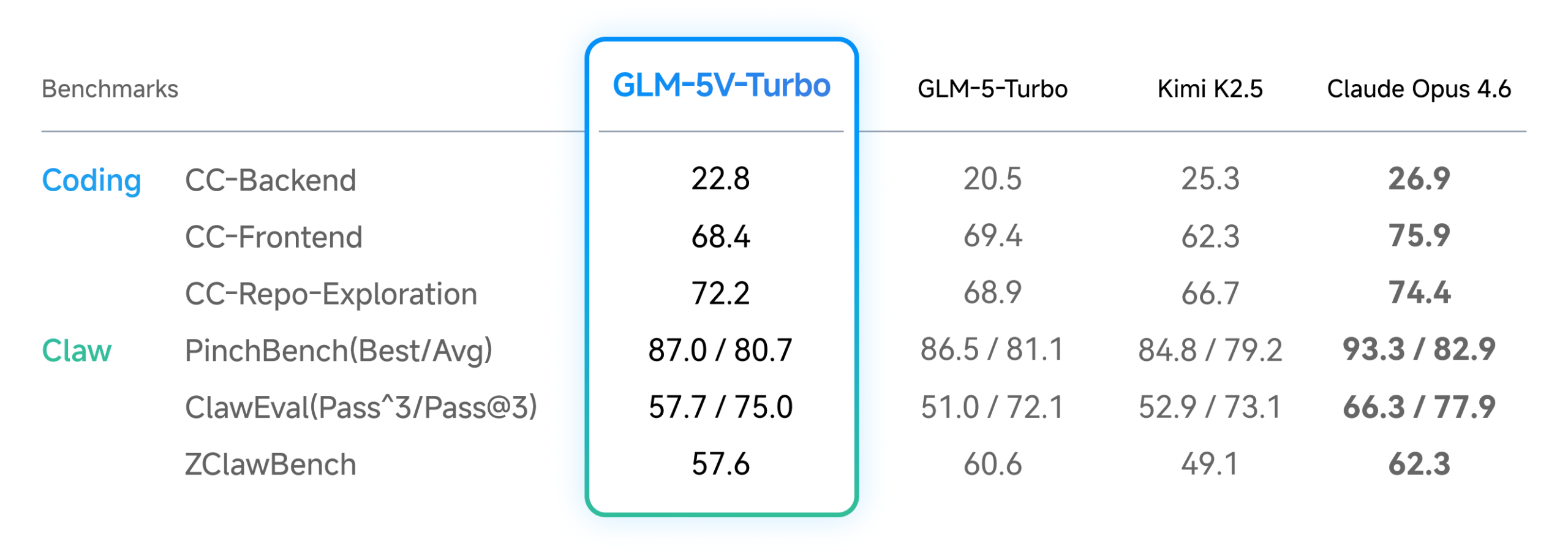

In text-only coding and agent benchmarks, Claude Opus 4.6 leads overall, but GLM-5V-Turbo outperforms Kimi K2.5 and Z.AI's own text model GLM-5-Turbo in several categories. | Image: Z.AI

Zhipu AI says its new GLM-5V-Turbo model can take a design mockup and output a runnable front-end project, collapsing what is often days of UI implementation into a single step. According to The Decoder, the multimodal model ingests images, video and text, supports a 200,000-token context window, and is designed for “agent workflows” that run a loop of perceiving an interface, planning actions and executing code.

If the claim holds up in independent testing, “design-to-code” stops being a boutique capability inside a few well-run teams and becomes a commodity feature bought by the API call. That shifts bargaining power away from the people who translate pixels into components, such as agency teams, junior developers, and the long tail of contractors who bill for incremental UI work. It shifts toward the platforms that sit upstream and downstream of them: design tools that control the source of truth (Figma-like workflows, asset libraries, design systems) and the CI/CD and hosting layers that decide what actually ships.

The immediate second-order effect is not that front-end work disappears, but that it changes shape. When a model can generate plausible React/Vue code from a screenshot, the scarce skill becomes specifying constraints, enforcing consistency, and catching edge cases before they become production incidents. Teams that already have strict component libraries, linting, visual regression tests, and accessibility gates will be able to treat model output as a draft. Teams without those guardrails will get “working” UIs whose logic is brittle, whose state management is improvised, and whose behavior diverges from the design intent in the hard-to-notice corners—keyboard navigation, error states, localization, and cross-browser quirks.

That is where the new gatekeepers emerge. A design-to-code model can output 10,000 lines of front-end code quickly; only a disciplined pipeline can reject it just as quickly. The more output is automated, the more value accrues to test harnesses, secure dependency management, and review tooling that can say “no” at machine speed. In practice, that means larger organizations and platforms with compliance budgets and mature build systems can absorb the productivity shock earlier, while smaller shops risk shipping unreviewed code because the whole point was to move fast.
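What such a gate looks like varies by team. As a minimal sketch, assuming the model's output is static HTML and using only Python's standard-library `html.parser`, the check below flags two common problems: images with no `alt` attribute and buttons with no accessible name. The class name `A11yGate`, the functions `find_a11y_issues` and `gate_passes`, and the two rules are illustrative assumptions, not part of GLM-5V-Turbo or any particular product; a production pipeline would lean on dedicated accessibility tooling and rendered-page tests rather than a simple tag scan like this.

```python
from html.parser import HTMLParser


class A11yGate(HTMLParser):
    """Hypothetical gate: collects two simple accessibility problems from HTML."""

    def __init__(self) -> None:
        super().__init__()
        self.issues: list[str] = []
        self._in_button = False        # True while inside a <button> element
        self._button_has_name = False  # set once the button gets text or a label
        self._button_line = 0          # line where the current <button> opened

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        line, _ = self.getpos()
        if tag == "img":
            # alt="" is valid for decorative images; only a missing alt is flagged.
            if "alt" not in attr_map:
                self.issues.append(f"line {line}: <img> has no alt attribute")
            elif self._in_button and (attr_map["alt"] or "").strip():
                # An image with alt text inside a button gives the button a name.
                self._button_has_name = True
        elif tag == "button":
            self._in_button = True
            self._button_line = line
            self._button_has_name = bool((attr_map.get("aria-label") or "").strip())

    def handle_data(self, data):
        # Visible text inside a button counts as its accessible name.
        if self._in_button and data.strip():
            self._button_has_name = True

    def handle_endtag(self, tag):
        if tag == "button" and self._in_button:
            if not self._button_has_name:
                self.issues.append(
                    f"line {self._button_line}: <button> has no accessible name"
                )
            self._in_button = False


def find_a11y_issues(html: str) -> list[str]:
    """Run the illustrative rules over an HTML string and return all findings."""
    gate = A11yGate()
    gate.feed(html)
    gate.close()
    return gate.issues


def gate_passes(html: str) -> bool:
    """Return True only when nothing was flagged, so a CI step can fail fast."""
    return not find_a11y_issues(html)


if __name__ == "__main__":
    sample = (
        '<img src="hero.png">'
        "<button><svg></svg></button>"
        '<button aria-label="Close"></button>'
    )
    for issue in find_a11y_issues(sample):
        print(issue)
```

Returning a plain boolean from `gate_passes` keeps the pipeline contract simple: any finding fails the build, and a reviewer only sees output that has already cleared the automated checks.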

There is also a quieter liability question embedded in the workflow. GLM-5V-Turbo is trained on large-scale multimodal data. According to The Decoder, Zhipu AI describes a “controllable and verifiable data system” to address the scarcity of agent-training data, but does not detail where that data comes from. Design-to-code outputs can inadvertently reproduce patterns, snippets, or component structures that look like ordinary UI but originate in licensed templates or proprietary codebases. When the output is “just UI,” teams tend to treat it as low-risk. The legal and security exposure arrives later, when the generated project becomes the foundation for a product.

Zhipu AI’s benchmark claims—strong results on GUI-agent tests such as WebVoyager and AndroidWorld, and no reported drop on text-only coding benchmarks—are, for now, self-reported. But the direction of travel is clear: the business of front-end implementation is being pulled into the same funnel as code completion and code review, where the work becomes cheaper, faster, and easier to audit—provided someone pays for the audit.
