Nvidia CaP-X finds frontier models fail at robot control without abstractions

Agentic scaffolding and test-time compute bring performance near human code, reliability shifts from model size to system design

Images

Image description

the-decoder.com

Image description

the-decoder.com

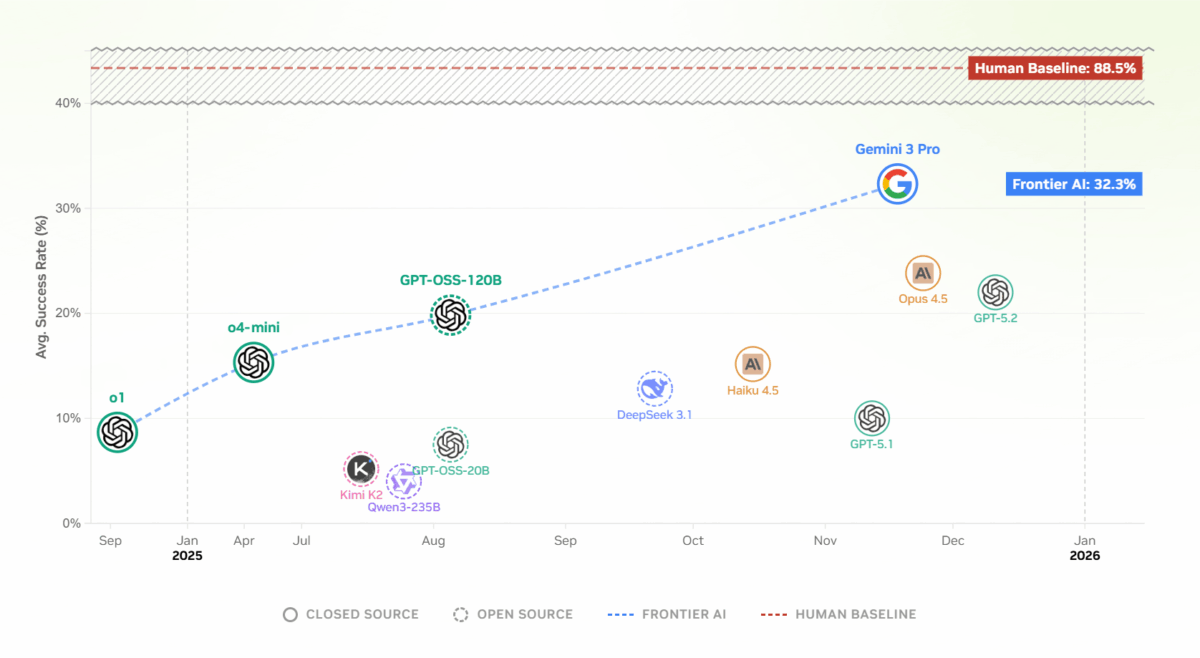

Even the strongest models tested fail at most robotics tasks when working without high-level abstractions.

the-decoder.com

Even the strongest models tested fail at most robotics tasks when working without high-level abstractions.

the-decoder.com

CaP-X puts a number on a robotics problem that has mostly been argued about in anecdotes: twelve frontier AI models can write plausible-looking robot-control code, but they cannot reliably make real machines do what the code intends on the first try.

According to The Decoder, researchers from Nvidia, UC Berkeley, Stanford and Carnegie Mellon built an evaluation framework called CaP-X that asks language models to generate programs for seven manipulation tasks, from lifting a cube to bimanual coordination. The premise is deliberately simple: instead of training a robot policy end-to-end on large motion datasets, let a general-purpose model write the control logic. In practice, the study finds, performance depends less on model “intelligence” in the abstract than on what software scaffolding the model is allowed to stand on.

The strongest models in the benchmark—named in the report as Gemini-3-Pro, GPT-5.2 and Claude Opus 4.5—do far better when they are handed high-level, human-designed commands such as “grasp object X and lift it.” Strip those abstractions away and require the model to assemble the pipeline from lower-level components—image segmentation, depth estimation, grasp planning, coordinate transforms, inverse kinematics—and success rates collapse. A single missing transform or a wrong frame convention is enough to turn a correct-looking program into a robot that reaches, collides, or freezes.

One of the more counterintuitive results is that feeding raw camera images directly into multimodal models makes things worse. The researchers attribute this to a practical alignment gap: these systems are rarely trained to reason simultaneously about code correctness and the physical consequences of executing that code on hardware. CaP-X instead inserts a “Visual Differencing Module,” where a separate vision-language model turns the scene into structured text and, after each attempt, describes what changed and whether the task is complete. Textual state summaries outperform both console logs and direct image inputs.

The paper’s proposed fix is not a new robot model but a workflow. The team’s “CaP-Agent0” system layers three mechanisms on top of existing models: iterative perception-to-text feedback via visual differencing; an automatically built library of helper functions harvested from successful runs; and parallel solution generation, with nine candidate programs produced and then combined by a supervisory agent. With that scaffolding, the researchers report that a training-free agent can approach human-level performance on the benchmark.

The immediate implication is that robot autonomy is becoming a systems engineering and safety problem rather than a straight race for larger models. The failure modes are not exotic: brittle dependencies, hidden assumptions in abstractions, and the difficulty of validating code that interacts with the physical world. The more capability is moved into orchestration—tool calls, hierarchical planning, guardrails, retries—the more the critical question becomes who audits the scaffolding, not which model wrote the first draft.

In CaP-X, the models do not become reliable by seeing more pixels or writing longer code. They become reliable when the task is broken into pieces that can be checked, rerun and gradually reused.