UK ad watchdog bans PixVideo AI advert

ASA says campaign implied non-consensual undressing despite app safeguards, as distribution rules become AI regulation by proxy


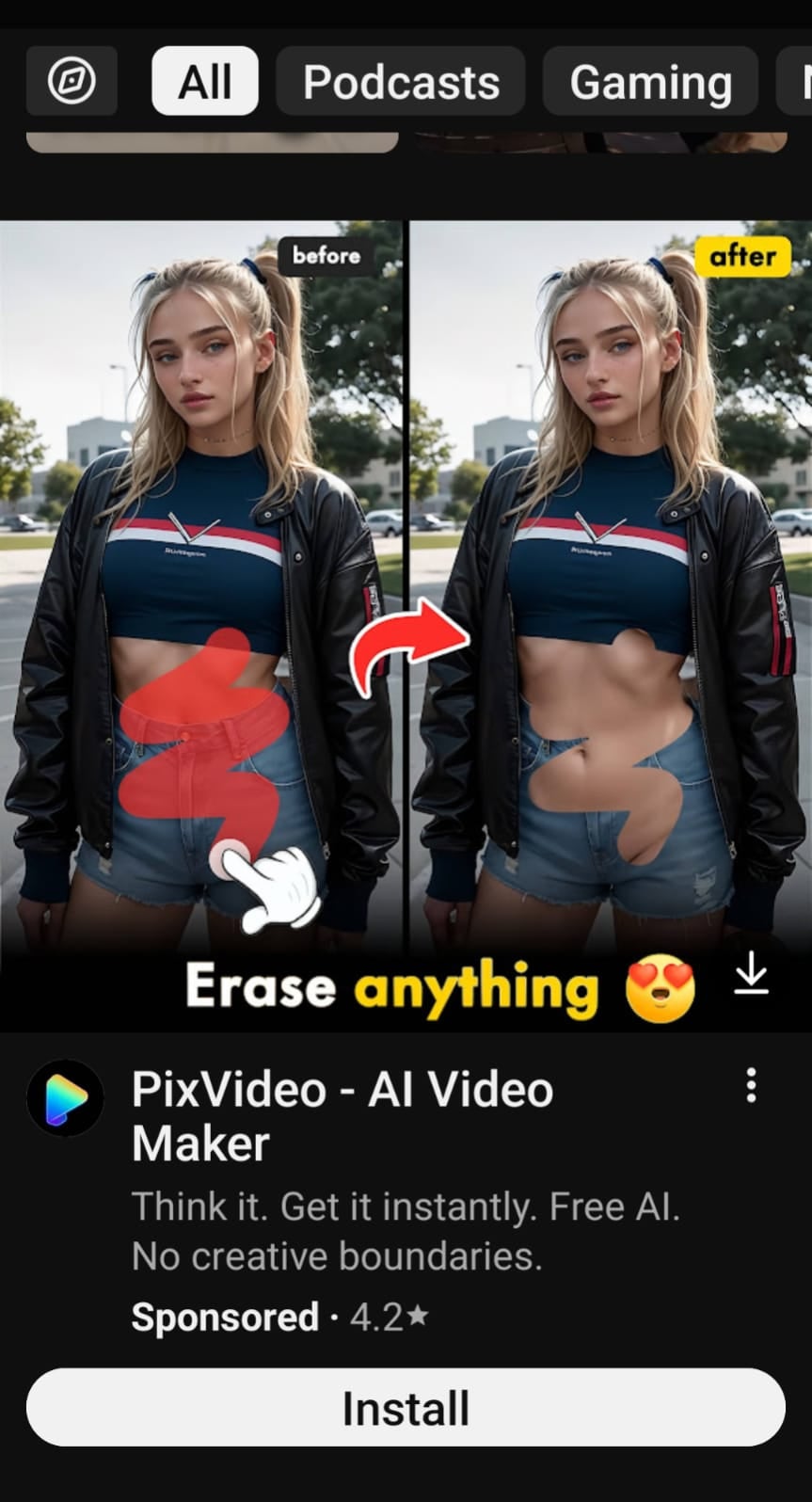

A photo issued by the Advertising Standards Authority (ASA) of an advert for PixVideo, which the regulator ruled appeared to condone digitally altering and exposing women’s bodies (ASA/PA Wire)


Britain’s Advertising Standards Authority has banned a YouTube advert for PixVideo – AI Video Maker after complaints that it implied users could remove a woman’s clothing. The Independent reports the ad showed a “before” image with red scribbles over a woman’s midriff and an “after” image with exposed skin, alongside the text “Erase anything,” prompting eight complaints.

Saeta Tech Ltd, which trades as PixVideo, acknowledged to the regulator that the ad was likely to cause serious offence, but said the problem lay in the presentation rather than the product. The company said its terms prohibit nude or sexually explicit content and that automated detection blocks exposed imagery; it also argued the app was not designed to enable removing clothing. The ASA accepted that the app did not permit users to create nude or sexually explicit content, but ruled the ad nonetheless objectified and sexualised the woman and appeared to condone digitally altering and exposing women’s bodies without consent.

The case is small but follows a familiar pattern. Regulators often cannot audit a model’s capabilities directly, but they can police the chokepoints around it, such as advertising, app distribution, and brand messaging. That changes how AI products have to be marketed: even if a tool’s actual functionality is constrained, an ad that suggests a controversial use case can itself become the enforceable offence. For developers, the practical lesson is that “what the model can do” can matter less than “what the ad implies it can do,” because the latter is legible to regulators.

Over time, this kind of enforcement tends to reward firms that can afford compliance review, pre-clear marketing, and maintain documented content-moderation claims. Smaller teams, or fast-moving consumer AI apps, face higher risk from a single aggressive campaign. The ASA’s ruling also shows how governance can migrate: instead of regulating generative AI systems directly, authorities regulate the public-facing wrapper and let distribution platforms do the rest.

The banned material was not a model card or a technical demo. It was a single image pair in a YouTube ad, and that was enough to trigger a formal prohibition.