Workers say they are training AI to replace them

The Guardian reports job tasks turned into model data under existing pay terms. Fees fall while error-correction work rises.

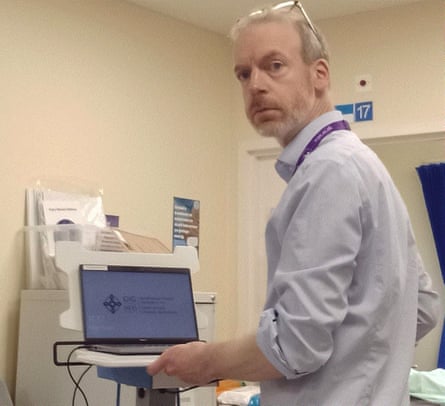

Mark Taubert, a palliative care consultant and professor, says he does not feel as though his role is threatened by AI. Photograph: Handout

Workers asked to help deploy artificial intelligence inside their own workplaces describe a new kind of labour dispute: the job is not being outsourced to another country, but to a model trained on their own output.

In a reported feature for The Guardian, Jane Clinton describes an academic editor in the UK who was recruited to help train “assistant editors” and only later learned the assistants were an AI system. The editor, identified as Christie, says she spent months correcting odd errors—unnecessary punctuation and nonsensical substitutions—before a company newsletter disclosed the pre-editing would be automated and her fee cut. “I now earn less money for correcting the mistakes of an AI, which takes me longer than editing from scratch,” she tells the paper.

The pattern is straightforward. Management gets the upside of lower unit costs, faster throughput, and a résumé line about “AI transformation”. The worker supplies the scarce ingredient—domain judgment and edge-case knowledge—and is paid on the old schedule, even as the work product becomes training data that reduces the future need for that worker. The cost of experimentation is pushed downward: staff absorb the time spent cleaning machine output, and customers absorb the quality variance until the system stabilises.

Clinton’s reporting suggests this is already happening in white-collar tasks where the input is text and the output can be checked quickly: editing, customer responses, internal documentation, triage. In healthcare, the same dynamic is present but constrained by risk. A palliative care consultant, Mark Taubert at Velindre University NHS Trust in Cardiff, describes recording hours of material and feeding guidelines into a pilot chatbot intended to answer patients’ out-of-hours questions. The system, he says, was “about 50% spot on” but struggled with misspellings, dialect and patients using the wrong drug names—precisely the real-world messiness that makes clinical communication hard to automate.

That gap—between polished demos and messy reality—is where the bargaining problem sits. If a worker is asked to “help the tool learn”, the worker is not just doing today’s job; they are transferring know-how into a reusable asset the employer controls. In most firms, the default contract language treats that transfer as free.

Private countermeasures exist, and they look less like traditional wage fights and more like data and process terms. Employment contracts can specify whether an employee’s work product may be used for model training, whether it can be shared with third-party vendors, and whether the employee is entitled to a premium when their output is used to build automation that reduces headcount. Teams can demand audit rights: what data is captured, where it is stored, and which systems it is used to train. Where collective bargaining is present, the most valuable clause may not be a percentage pay rise but a rule that automation projects require explicit consent, compensation schedules, and a documented error budget—so the cost of mistakes is not quietly assigned to the people doing the cleanup.

Clinton’s article ends with workers still unsure whether the technology will fully replace them. But in the editor’s case, one concrete change has already happened: the AI arrived first, and the pay cut followed after the training was done.