OpenAI flags violent ChatGPT use but declines police referral in Canada shooting case

As abuse detection yields ambiguous signals and high false-positive stakes, AI safety quietly drifts toward privatized pre-crime

Police tape surrounds the Tumbler Ridge Secondary School and other buildings in Tumbler Ridge, B.C. on Wednesday, Feb. 11, 2026. (Jesse Boily /The Canadian Press via AP)

Students exit the Tumbler Ridge school after deadly shootings, in British Columbia, Canada, Tuesday Feb. 10, 2026. (Jordon Kosik via AP)

OpenAI says it considered alerting Canadian law enforcement in 2025 after internal systems flagged a user account for the “furtherance of violent activities,” but ultimately chose not to refer the case because it did not meet the company’s threshold of an “imminent and credible risk of serious physical harm,” according to The Independent, citing reporting first published by The Wall Street Journal.

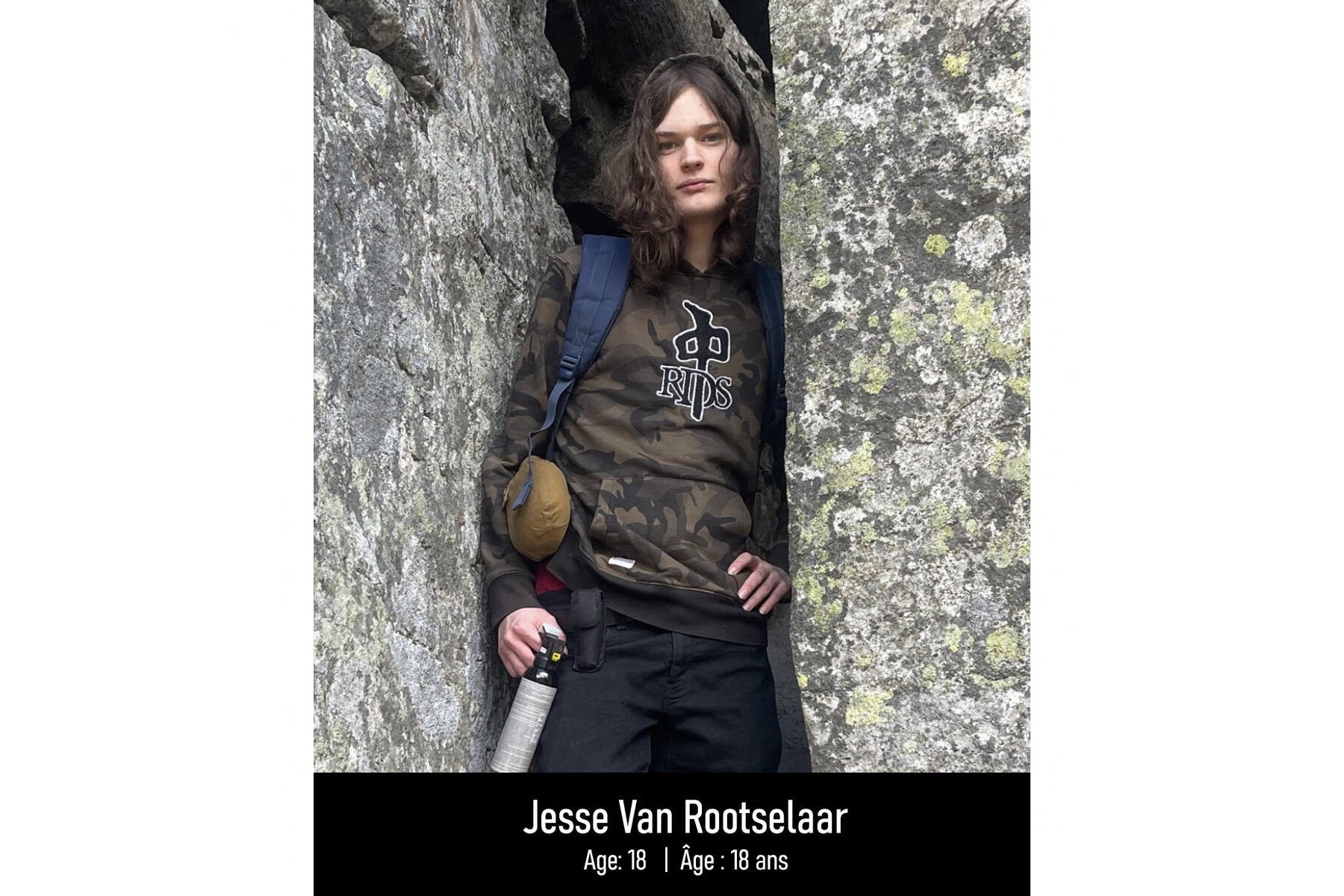

The suspect, identified as 18-year-old Jesse Van Rootselaar, is accused of killing family members and then carrying out a mass shooting at a school in Tumbler Ridge, British Columbia, before dying by suicide, The Independent reports. OpenAI says it banned the account in June 2025 for violating usage policy and later, after the attack, proactively contacted the Royal Canadian Mounted Police with information relevant to the investigation.

Fox News, also citing the Journal, adds that an automated review system flagged the interactions and that roughly a dozen employees were aware of the concerning content, described as scenarios involving gun violence over multiple days, yet the company still did not contact police. OpenAI’s explanation is a familiar one in content moderation: over-reporting creates its own harms, and “being too trigger-happy” with law-enforcement referrals risks false accusations and privacy violations.

Technically, the episode exposes how hard it is to draw the boundary between “hazard” and “actionable risk.” A language model can generate or respond to violent hypotheticals for many reasons: fiction writing, venting, curiosity, role-play, or actual planning. Prompt logs are not intent detectors; they are text. Even if you add metadata (frequency, escalation, requests for operational detail), you still face base-rate math: true would-be attackers are rare, so a system tuned to catch them will drown in false positives unless it becomes extraordinarily intrusive.
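The base-rate problem can be made concrete with Bayes' rule. The sketch below is illustrative only: the user count, base rate, sensitivity, and false-positive rate are hypothetical assumptions chosen for round numbers, not figures from OpenAI or from any of the reporting cited here.

```python
def positive_predictive_value(base_rate: float,
                              sensitivity: float,
                              false_positive_rate: float) -> float:
    """Probability that a flagged account is a real threat (Bayes' rule)."""
    true_flags = base_rate * sensitivity
    false_flags = (1.0 - base_rate) * false_positive_rate
    return true_flags / (true_flags + false_flags)


# All values below are hypothetical assumptions for illustration.
users = 100_000_000            # assumed user population
base_rate = 1 / 1_000_000      # assumed share of users who are real threats
sensitivity = 0.99             # assumed share of real threats the system flags
false_positive_rate = 0.01     # assumed share of harmless users it also flags

ppv = positive_predictive_value(base_rate, sensitivity, false_positive_rate)
expected_true_flags = users * base_rate * sensitivity
expected_false_flags = users * (1 - base_rate) * false_positive_rate

print(f"Share of flags that are real threats: {ppv:.4%}")
print(f"Expected true flags:  {expected_true_flags:,.0f}")
print(f"Expected false flags: {expected_false_flags:,.0f}")
```

Under these assumed numbers, a detector that is right 99 percent of the time in both directions would still produce roughly a million false flags for about a hundred true ones, so only around one flag in ten thousand would point at a real threat.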

That leaves OpenAI—an unelected private firm—trying to operate a de facto threat-assessment function with no warrant power, no due-process safeguards, and no democratic legitimacy. The company’s threshold (“imminent and credible”) sounds like a civil-liberties concession, but it also functions as liability management: refer too early and you become a privatized tip line that ruins lives; refer too late and you become the villain in the post-mortem.

A workable early-warning system would require two things that the public debate carefully avoids. First, validated performance metrics: false-positive and false-negative rates on realistic populations, not curated demos. Second, a clear governance model for when a private platform escalates to state coercion. Without those, “AI safety” becomes a marketing label for a pre-crime pipeline—implemented by corporate policy teams, audited by nobody, and justified after the fact.
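As a rough sketch of what the first requirement means in practice, both error rates can be computed from a set of reviewed cases in which each flag decision is paired with the eventual ground truth. The function name `error_rates` and the sample counts are hypothetical, invented purely for illustration; no such dataset is described in the reporting.

```python
from typing import Iterable, Tuple


def error_rates(outcomes: Iterable[Tuple[bool, bool]]) -> Tuple[float, float]:
    """Return (false_positive_rate, false_negative_rate).

    Each outcome is a (flagged, was_real_threat) pair.
    """
    tp = fp = tn = fn = 0
    for flagged, real in outcomes:
        if flagged and real:
            tp += 1
        elif flagged and not real:
            fp += 1
        elif not flagged and real:
            fn += 1
        else:
            tn += 1
    # Guard against empty classes so the sketch never divides by zero.
    fpr = fp / (fp + tn) if (fp + tn) else float("nan")
    fnr = fn / (fn + tp) if (fn + tp) else float("nan")
    return fpr, fnr


# Made-up review sample: 4 real threats among 1,000 reviewed accounts.
sample = (
    [(True, True)] * 3        # real threats that were flagged
    + [(False, True)] * 1     # real threat that was missed
    + [(True, False)] * 20    # harmless users who were flagged
    + [(False, False)] * 976  # harmless users left alone
)

fpr, fnr = error_rates(sample)
print(f"False-positive rate: {fpr:.2%}")
print(f"False-negative rate: {fnr:.2%}")
```

The point of measuring on a realistic population is that the harmless group dominates: even a small false-positive rate translates into far more wrongly flagged people than correctly flagged ones.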

The only way to make such systems reliably predictive may be to expand surveillance far beyond chat logs by linking identity, devices, location, purchases, and social graphs, in effect turning a chatbot into an informant and the internet into a panopticon. At that point, the question is no longer whether the model can predict violence. It’s whether a free society should want it to.